My Long Relationship with Dropbox

For basically as long as I’ve been doing my own software development, I’ve used “the cloud” to store a lot of my data. Specifically, I used Dropbox with the “Packrat” option, which backed up all of my data to the cloud and even kept track of per-file history. This worked very well – I stored all of my important information in my Dropbox folder, and when I got a new PC I would just sync from Dropbox. When I was away from the PC, I could always access my important documents through the Dropbox app. Dropbox even gave me a reason to avoid learning proper version control, because I could just write code directly in Dropbox and let Packrat keep track of the rest.

The list of Dropbox pros is long – but after a decade of using it, I started to run into some problems:

- Removal of the “Public” folder: For the first 5 years of using Dropbox, I made heavy use of the “Public” folder, which let you share files with anyone on the internet. I used it as a sort of FTP server to host all of my software and updates. Once the Public folder was removed, I migrated to a proper FTP server, which was much harder to use, and losing the folder diminished the value of Dropbox’s offering.

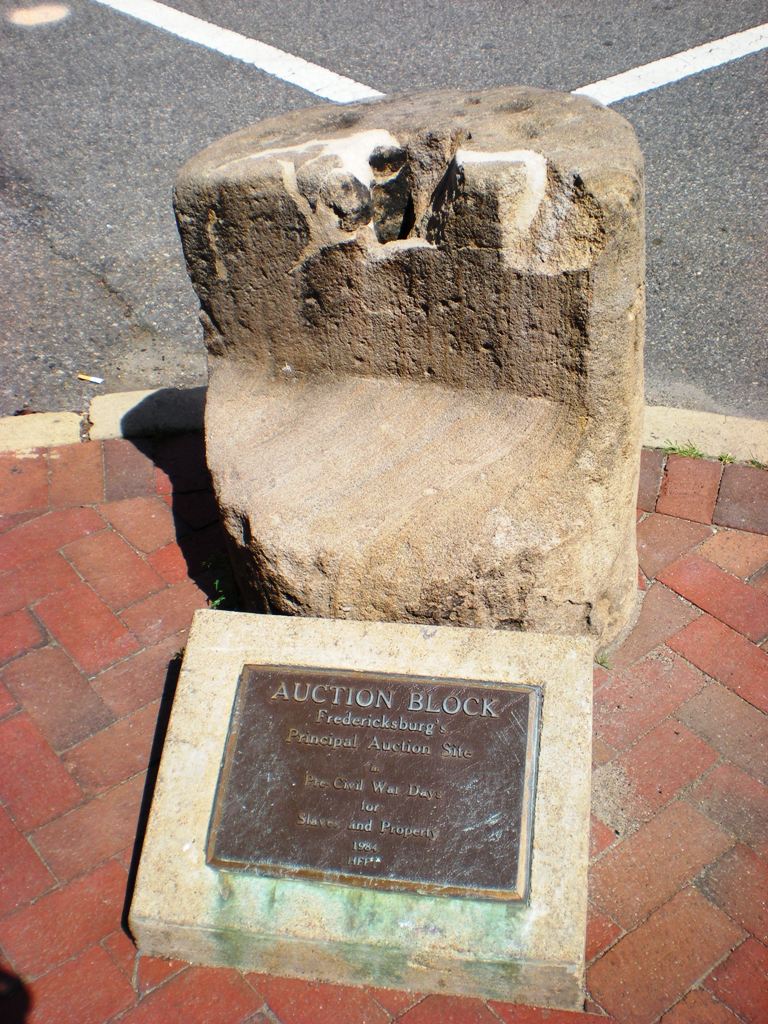

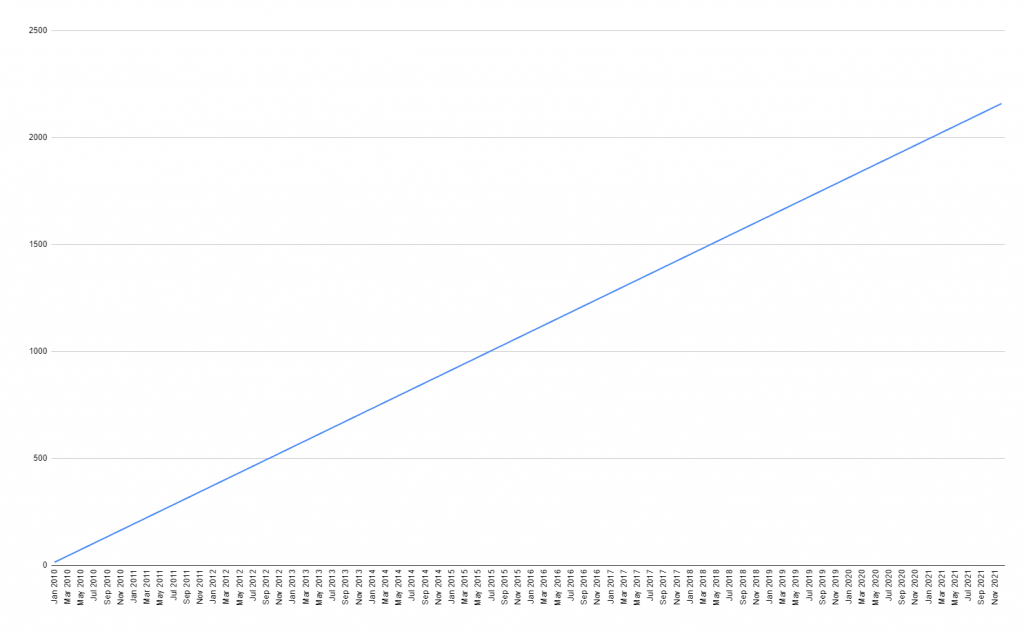

- Cost: With Packrat, I was paying $15 per month. In total, I’ve paid ~$1,800 to Dropbox to store my files over the past decade. That’s a lot of money – it’s always been worth it to me not to have to manage things myself, but it’s something to consider.

- Proliferation of Cloud Services: There are many cloud services available, and each seems to specialize in something different. Google Drive is great for photos and Google Docs, OneDrive is great for Word documents, and Dropbox is great for everything else. Using all of these services meant that my files were never in one place. This isn’t a Dropbox problem – it’s more of a me problem – but it was a problem nonetheless.

- Security: Finally, Dropbox is fine for things that aren’t very private, but I found myself storing passwords and other sensitive information in my Dropbox for lack of a more secure solution. Not good.

The Synology 920+

The issues with Dropbox nagged at me for a while, but I didn’t do anything about them until Black Friday of 2021, when I bought a Synology 920+ with four 4TB Seagate IronWolf drives on a whim. I didn’t have a plan, but I had a vague idea that I’d get rid of my Dropbox subscription entirely.

And that’s exactly what I did. The software that comes with the NAS (Synology DSM) is pretty nice, so I was able to quickly set up RAID across my drives with single-drive fault tolerance and start migrating: Google Photos to Synology Photos, my media off my desktop drive and onto the Synology (to be served with Jellyfin), and my Google Drive and OneDrive files.
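For a rough sense of what single-drive fault tolerance costs in space: one drive’s worth of capacity goes to parity. The sketch below is a back-of-the-envelope calculation, assuming a RAID 5-style layout (SHR with one-drive redundancy works out the same when all drives are equal in size) and ignoring filesystem overhead and the TB vs. TiB difference. The function name `usable_capacity_tb` is hypothetical, just for illustration.

```python
def usable_capacity_tb(drive_count: int, drive_size_tb: float,
                       parity_drives: int = 1) -> float:
    """Approximate usable space for an array of equal-size drives.

    Assumes single-drive fault tolerance (RAID 5-style), where one drive's
    worth of space holds parity, so usable = (count - parity) * size.
    Filesystem overhead and TB-vs-TiB differences are ignored.
    """
    if drive_count <= parity_drives:
        raise ValueError("need more drives than parity drives")
    return (drive_count - parity_drives) * drive_size_tb


DRIVES, SIZE_TB = 4, 4.0  # four 4TB IronWolf drives, per the post
print(f"raw: {DRIVES * SIZE_TB:.0f} TB, "
      f"usable: {usable_capacity_tb(DRIVES, SIZE_TB):.0f} TB")
```

With four 4TB drives, this prints `raw: 16 TB, usable: 12 TB`. Setting `parity_drives=2` models two-drive fault tolerance, which would leave 8 TB usable.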

The migration went smoothly, but it was basically an archaeological dig through all of my old stuff. Across the 12 hard drives I had lying around, I found old screenshots (embarrassing), game saves (impressive), and plenty of old schoolwork that I didn’t care about.

I haven’t put together a proper full review of the 920+, but I’ve been pretty happy with it. The stock fans are a little noisy (fixable, but replacing them “voids the warranty”), the stock RAM is not quite enough (fixable, but “voids the warranty” if you buy RAM that’s not Synology-branded), and the plastic case leaves a bit to be desired. Functionality-wise, though, I’m happy with it, and I’m also happy that I got an “out of the box” software experience with no need to go digging for other software to manage my photos and files.

Once the migration was complete, I was able to cancel my Dropbox subscription. In all, I paid $1,115 for the hardware, which is quite the lump sum (that would have paid for 6 years of Dropbox!), but I now have 5 times the raw storage and more flexibility to store whatever I want, with the peace of mind that it’s not sitting on someone else’s server.
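The “6 years of Dropbox” figure is easy to check. Below is a minimal break-even sketch using only the numbers from this post ($15/month for Dropbox with Packrat, $1,115 for the hardware). It ignores electricity and drive replacements. The hypothetical `new_monthly_usd` parameter is there for any recurring cost on the new setup, such as an offsite backup bill; the post doesn’t give that figure, so it defaults to zero.

```python
def months_to_break_even(upfront_usd: float, old_monthly_usd: float,
                         new_monthly_usd: float = 0.0) -> float:
    """Months until an upfront purchase is paid back by lower monthly bills."""
    monthly_savings = old_monthly_usd - new_monthly_usd
    if monthly_savings <= 0:
        raise ValueError("new monthly cost must be lower than the old one")
    return upfront_usd / monthly_savings


DROPBOX_MONTHLY_USD = 15.0  # Dropbox with Packrat, per the post
HARDWARE_USD = 1115.0       # Synology + four drives, per the post

# Ten years of Dropbox at $15/month
print(f"Dropbox over 10 years: ${DROPBOX_MONTHLY_USD * 12 * 10:,.0f}")

months = months_to_break_even(HARDWARE_USD, DROPBOX_MONTHLY_USD)
print(f"Break-even: {months:.1f} months (~{months / 12:.1f} years)")
```

This prints `Dropbox over 10 years: $1,800` and `Break-even: 74.3 months (~6.2 years)`. Passing a nonzero `new_monthly_usd` pushes the break-even point further out.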

It’s the homelab bug

But my adventure did not stop with buying a Synology. As soon as my files were on the Synology, I decided I wanted to use its built-in Docker support to run Minecraft, Valheim, and Jellyfin servers, but I soon found that running all three at once wasn’t something the 920+ was particularly well equipped for…

So I ended up building another system to serve as my “compute” and relegated the Synology to be just storage (I’m hoping to have another post on that “other” system later).

And now that I had these two servers, I needed to buy a fancy network switch to hook them together, then a fancy router to hook to the switch, and then fancy access points for my Wi-Fi…

And even now, I have the nagging feeling that running services (e.g., Photos) on the Synology is not a great idea, and that I should move the Photos app to the compute layer instead. And once I do that, I’ll probably need a few more servers to ensure high availability for the apps I run.

Oh, what have I done?

The elephant in the room: backups

One of the major selling points of a Synology is eliminating the costly monthly bills you pay to cloud providers. The elephant in the room with my new “homelab” is offsite backups, which I currently handle with Backblaze B2. Backblaze B2 comes with a monthly bill, however, so I’m back where I started: paying monthly for cloud services.

That said, the monthly bill is cheaper than Dropbox was, and I only use it for automated backups (I haven’t had to recover anything yet!).

So what’s the takeaway?

The pessimistic takeaway is that I’ve spent a lot of time and money building cloud services myself. Besides security, I don’t think there are any tangible benefits to doing this, and I wouldn’t recommend it to folks who only need a few terabytes of storage.

I’d only recommend this if you have huge amounts of data or if you’re doing it for fun. I guess in my case, I’m having “fun”.