Homelab Overview

As we go into 2023, I thought that I would share the layering of my homelab – which I have spent admittedly far too much time on. In general, I spend a lot of time dealing with complex systems during my dayjob. When I’m at home, I try to keep my systems as simple as possible so that I don’t have to spend hours re-learning tooling and debugging when things go wrong. Even if this costs some extra money, it’s worth it because it saves time.

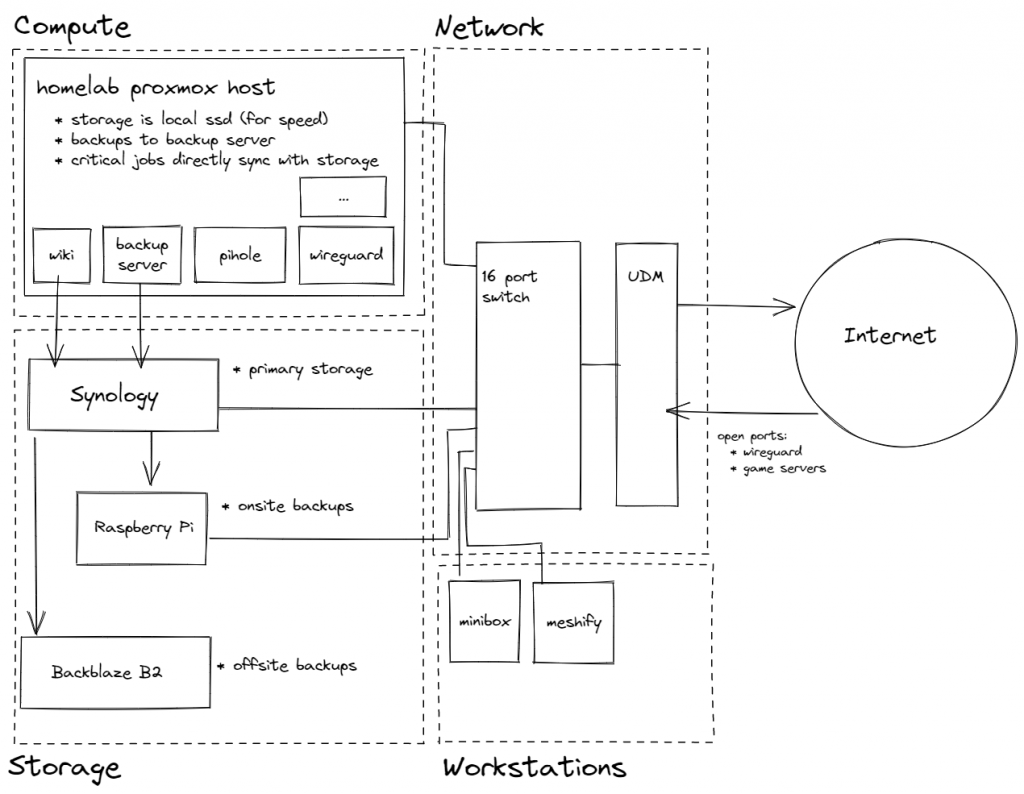

My “homelab” is split into four layers: workstations (actual computers on desks), compute (servers which run applications), storage (server which hosts disks), network (routers and switches).

If I try to get more rigorous about the definitions, I arrive at:

- Workstations are the client-side interfaces to storage/compute which also have local storage and compute to host games and other local processing (eg photo editing, video editing, word processing, etc). These workstations are backed up to the Storage layer regularly.

- Compute is the server-side hosting infrastructure. All applications run on “compute”. The compute should probably be using the storage layer as its primary storage, but due to speed considerations (game servers), the compute layer uses its own local storage and backs up regularly to the storage layer.

- Storage is the server-side storage infrastructure. All storage applications (NFS, SMB) are hosted on storage as well as some other applications which should probably be moved to compute, but are currently on storage due to a lack of research (eg Synology Photos, Synology Drive).

- Network is the network backbone which connects the workstations/compute/storage and routes to the Internet. My network currently supports 1GBPS.

In my homelab, I’ve decided to split each layer into separate machines just to make my life easier. Workstations are physical computers on desks (eg Minibox or brandon-meshify), Compute is a single machine in my closet, Storage is a combination of a Synology 920+ (for usage) and a Synology 220+ (for onsite backups of the 920+), and Network is a combination of Unifi hardware (Dream Machine Pro, Switch16, 2 APs).

It’s definitely possible to combine this layering – I could imagine a server which performs both compute and storage or maybe a mega-server which performs compute and storage as well as networking. But for my own sanity and for some extra reliability, I’m choosing to host each layer separately.

Dependencies Between Layers

Furthermore, each layer can only depend on the layers below it.

- The network is the most critical layer and every other layer depends on the network. In cases where the network must depend on other layers, workarounds must be present/documented.

- Storage depends on the network, but otherwise has no dependencies. If storage is down, we can expect compute and workstations to not operate as expected.

- Compute depends on storage and network; however, since the compute layer is by definition where applications should be hosted, there are a few instances of applications which lower layers might depend on.

- Workstations are the highest layer and depend on all other layers in the stack.

Again, I have these rules to keep the architecture simple for my own sanity. If my NAS goes down, for example, I can expect basically everything to break (except the network!). I’m okay with that because creating workarounds is just too complicated (but definitely something I would do in my dayjob).

As I mentioned in the bullet points; there are some exceptions.

- The compute layer runs pi-hole to perform DNS-level adblocking for the entire network. For this to work, the IP Address of pi-hole is advertised as the primary DNS server when connecting to my home network. Unfortunately, this means that if the compute layer is down, clients won’t be able to resolve IP addresses; I need to manually adjust my router settings and change the advertised DNS Server.

- The compute layer runs Wireguard to allow me to connect to my network from the outside world. However, if the compute layer is down then Wireguard goes down which means that I won’t be able to repair the compute layer from a remote connection. As a backup, I also have a L2TP VPN enabled on my router. Ideally, my router would be running a Wireguard server since Wireguard is awesome – but the Unifi Dream Machine Pro doesn’t support that natively.

- My brain depends on the compute layer because that’s where I host wikijs. If the compute layer goes down, my wiki becomes inaccessible (that’s also where I have wiki pages telling me how to fix things). To mitigate this, I use wikijs’s built in functionality to sync articles to disk and I sync them directly to the storage layer. If compute goes down, I should be able to access the articles directly from storage (although probably with broken hyperlinks).

My Fears

Overall, I’m happy with this homelab setup and it’s been serving me well for the past year. I’m a pretty basic homelabber and my homelab isn’t even close to the complex setups I see on YouTube and Reddit. My homelab is running a few game servers (Valheim, Ark, Minecraft), Media Hosting (Jellyfin), and some utilities (pi-hole, wikijs) all under proxmox. This is not nearly as crazy as the folks who have tons of security cameras, the *arr stack to automagically and totally legally grab media on demand, or folks who use their homelab to perform their actual compute-heavy dayjobs.

That said, I’m still worried about the lack of general high-availability in my homelab. For example: if my single UPS for all layers dies during a power outage, if my single compute host dies, if any of my networking equipment die. Any of these issues will knock out my homelab for several days while I wait for replacement hardware to arrive.

But then again, that’s probably okay – having multiple compute nodes or multiple network nodes is a huge added expense with perhaps minimal upsides. After all, I’m not running 24/7 services for customers – it’s just me and my family.